Back to the technical posts…

I’m going to take an example of a very simple execution plan that I used in a presentation a few weeks back and go over it in some detail, pointing out information that is available and what can be read from the plan.

The execution plan can be downloaded (in xml format) here – ExecPlanDem01.zip

The query that the plan came from is a very simple two table query. It’s not a very optimal plan, but that’s because I forced an index hint in order to generate a more interesting plan. Without the hint, it’s a simple exec plan with two index seeks and a nested loop join.

Overview

The first thing that can be seen from the execution plan is that most of the cost of the query is in two operations, a key lookup (formerly called a bookmark lookup) and a clustered index seek. The high cost of the key lookup is a good sign that the query is using an inappropriate index. (in a future post I’ll discuss using the exec plan to choose indexes)

The second thing that can be seen is that there is a filter operator. This again suggests that there is not an appropriate index, as this plan means that SQL will first select rows from the table and later filter out rows that aren’t needed.

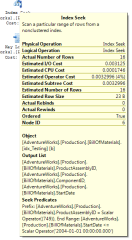

Index seek

The first thing to notice about the index seek is that there are two seek predicates, one an equality, one an inequality. Having them both as seek predicates indicated that the columns in this index are in the correct order to support the two predicates. (I’ll do an entire post sometime on index key column ordering)

The second thing to note is that the estimated and actual rows are identical. This is good, it indicates that the statistics on this index are up to date and that the optimiser managed to get an accurate estimate of the rows that would be returned.

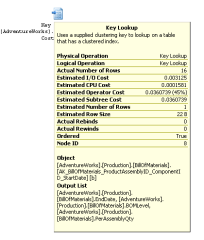

Key Lookup

The presence of a key lookup operator indicates that the nonclustered index that was used to locate the rows affected by the query did not have all the columns required by the query. The missing columns must be looked up from the clustered index. The output list shows what columns were fetched by the key lookup. This can help when altering indexes to get a covering index.

The presence of a key lookup operator indicates that the nonclustered index that was used to locate the rows affected by the query did not have all the columns required by the query. The missing columns must be looked up from the clustered index. The output list shows what columns were fetched by the key lookup. This can help when altering indexes to get a covering index.

One important thing to note is that the estimated rows for this operator is 1. That’s not a cardinality problem, it’s because the estimated rows is per execution of the operator and the actual rows is total over all executions. The number of times an operator is executed is stored within the XML, however the SQL 2005 management studio doesn’t show it. Management studio in SQL 2008 does.

This shows one of the reasons why the key lookup can be a bottleneck. For each row returned by the index seek, the key lookup does a clustered index seek returning a single row. For a small number of rows, that’s not too much or a problem, for hundreds of rows it can be a major bottleneck in the query.

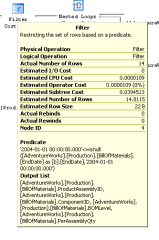

Filter

The last interesting part of the execution plan is the filter.

Having a filter in the exec plan indicates that there was some condition that could not be evaluated as part of the index seek/scan. This may be because in involves an aggregate, because it involves columns in multiple tables or, as in this case, because the column was not part of the index and had to be looked up separatly.

As with the index seek, the estimated and actual row counts are very similar, indicating that the cardinality estimate is accurate for this query.

So, that’s most of the interesting things that can be gleaned from the exec plan. I have a couple more posts planned in the series on reading exec plans, one showing a more complex plan and the other going into more detail on the % costs that the plan shows.

That’s all for now. I’ll see if ‘i can dig up a more complex plan to work through.

A fantastic blog – “How to glean interesting things from execution plan ?”

If the key lookup returned a huge number of rows the cost can also be reduced by rewriting the filter: “‘2004-01-01 00:00:00.000′<=isnull(b.EndDate,’2004-01-01 00:00:00.000’)”

as: “(‘2004-01-01 00:00:00.000′<=b.EndDate or b.EndDate is null)”

because isnull is a function that will be executed for every row in the index and the second will only select the rows that are true.

Unless your example is for bad where clauses, it is a good practice NEVER to put functions in where clauses.

Hehe. That query comes straight out of the Adventureworks database. It wasn’t an example of a good or a bad query, it was an example of how to read an execution plan.

The key lookup’s rows couldn’t be reduced in this case, because the column EndDate isn’t in the nonclustered index, so the key lookup has to be done before the filter on EndDate (in either the original or the revised form) can be applied.

As for functions in a where clause – https://www.sqlinthewild.co.za/index.php/2007/07/19/functions-in-a-where-clause/

This is good news, I

Pingback: Recent Links Tagged With "cardinality" - JabberTags

Gali, I read in msdn forums that Bookmark Lookup(SQL 2000 or before)=Clustered Index seek (SQL 2005 RTM & SP1) = Key Lookup (SQL 2005 SP2 & later)..then how come we have both Key Lookup & Clustered Index Seek in the same exuection plan?

A clustered index seek is a legitimate operator. It’s, as it’s name says, a seek on a clustered index. In 2005 RTM and SP1 both true Clustered index seeks and bookmark lookups appeared in the plan as clustered index seeks. In SP2 onwards, the lookups are key/RID lookups and the clustered index seeks in the plan are actual clustered index seeks.

Thanks Gali for clarification. I’m gaining so much good info from all of your posts.

Gail,

Thanks for clearing up the fact that there is no cardinality error in the estimate of the key lookup (1 vs 16). With no visibility of the number of executions it would be all too easy to waste time investigating statistics that are neither missing nor stale.

Excellent blog, keep up the good work!

John, for a more detailed look at that, try this post: https://www.sqlinthewild.co.za/index.php/2009/09/22/estimated-rows-actual-rows-and-execution-count/