All indexes are unique

Well, that’s a rather contentious title. There are probably several people shaking their heads at this point. Let me explain.

I was listening to a podcast with Kimberly Tripp this morning, and she mentioned this briefly. I thought it would be a good discussion to end a short series on indexes and selectivity.

The Clustered Index

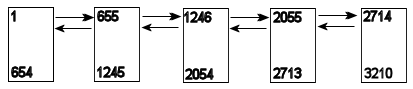

A clustered index has to be unique, because the clustering key acts as the row’s location in the table. If the index is not defined as unique, SQL Server makes it unique by adding a uniquifier: a hidden 4-byte integer that is added only where it is needed to tell apart rows with duplicate key values.

It’s not clearly documented anywhere, but it is mentioned in a couple of places. From MSDN:

> If the clustered index is not a unique index, SQL Server makes any duplicate keys unique by adding an internally generated value called a uniqueifier. This four-byte value is not visible to users. It is only added when required to make the clustered key unique for use in nonclustered indexes. SQL Server retrieves the data row by searching the clustered index using the clustered index key stored in the leaf row of the nonclustered index.
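
If you want to see the effect for yourself, here is a rough sketch rather than a definitive test. The table and index names are made up for illustration, the multi-row VALUES syntax assumes SQL Server 2008 or later, and it should be run in a scratch database. The idea is to create a clustered index without the UNIQUE keyword, insert some duplicate keys, and compare the smallest and largest leaf-level record sizes.

```sql
-- Hypothetical demo table; the names are illustrative only.
CREATE TABLE dbo.UniquifierDemo (
    Category INT      NOT NULL,
    Filler   CHAR(10) NOT NULL
);

-- Clustered index declared without UNIQUE, so duplicate Category values are allowed.
CREATE CLUSTERED INDEX cix_UniquifierDemo
    ON dbo.UniquifierDemo (Category);

-- One distinct key value (1) and three rows sharing a key value (2).
INSERT INTO dbo.UniquifierDemo (Category, Filler)
VALUES (1, 'a'), (2, 'b'), (2, 'c'), (2, 'd');

-- Leaf level (index_level = 0) of the clustered index (index_id = 1).
-- Record sizes are only reported in SAMPLED or DETAILED mode.
SELECT index_level,
       record_count,
       min_record_size_in_bytes,
       max_record_size_in_bytes
FROM sys.dm_db_index_physical_stats(
         DB_ID(), OBJECT_ID('dbo.UniquifierDemo'), 1, NULL, 'DETAILED')
WHERE index_level = 0;

-- Clean up the demo table.
DROP TABLE dbo.UniquifierDemo;
```

Every column in the table is fixed width, so if nothing were being added behind the scenes, the minimum and maximum record sizes should match. If the uniquifier works as described above, the rows that repeat an existing key value should come out larger than the rest.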

So all clustered indexes are unique.