Index columns, selectivity and inequality predicates

So, following on from my post last week, I’m going to take a look at how selectivity and index column order affect inequality predicates.

One thing to note straight off is that the selectivity of a column is much less important for inequality predicates than it was for equality. For equality predicates, the selectivity alone can give a reasonable idea of the number of rows a particular predicate will return. That’s not the case with inequalities. Also, with inequality predicates, the order of columns in the index becomes very important.

One of the most important considerations with inequality predicates is the number of rows that the predicate will return. An identity column may be highly selective, but if the filter is for all rows > 0 and the identity values start t one, then an index on that column is not going to be very useful.

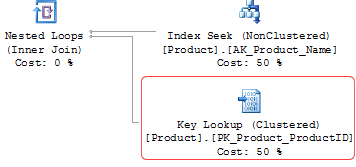

The other consideration when there are inequality predicates is that only that column and columns to the left of it in the index key can be used for index seeks. Any columns to the right of the column with the inequality is no longer eligible for seeking.

To explain with an example, consider our hypothetical table from the previous post (with one small change):

CREATE TABLE ConsideringIndexOrder ( ID INT, SomeString VARCHAR (100), SomeDate DATETIME DEFAULT GETDATE() );

The same as previously, there’s a single nonclustered index on all three columns, in the order ID, SomeDate, SomeString.

If there’s an inequality predicate, then then the index is only fully seekable for the following queries

… WHERE ID = @ID AND SomeDate = @dt AND SomeString > @str

… WHERE ID = @ID AND SomeDate > @dt

… WHERE ID > @ID