I see a lot of advice that talks about the clustered index been the best index for use for range queries, that is queries with inequalities filters, queries that retrieve ranges of rows, as opposed to singleton queries, queries that retrieve single rows (including, unfortunately, a Technet article).

I suspect the reasoning behind this advice is the idea that the clustered index stores the data in order of the clustering key (ack) and hence it’s ‘logical’ that such a structure would be best for range scans as SQL can simply start at the beginning of the range and read sequentially to the end.

Question is, is that really the case?

Let’s do some experiments and find out.

CREATE TABLE TestingRangeQueries ( ID INT IDENTITY, SomeValue NUMERIC(7,2), Filler CHAR(500) DEFAULT '' ) -- 1 million rows INSERT INTO TestingRangeQueries (SomeValue) SELECT TOP (1000000) RAND(CAST(a.object_id AS BIGINT) + b.column_id*2511) FROM msdb.sys.columns a CROSS JOIN msdb.sys.columns b -- One cluster and two nonclustered indexes on the column that will be used for the range filter CREATE CLUSTERED INDEX idx_RangeQueries_Cluster ON TestingRangeQueries (ID) CREATE NONCLUSTERED INDEX idx_RangeQueries_NC1 ON TestingRangeQueries (ID) CREATE NONCLUSTERED INDEX idx_RangeQueries_NC2 ON TestingRangeQueries (ID) INCLUDE (SomeValue) GO

The query that I’ll be testing with will do a sum of the SomeValue column for a large range of ID values. That means that of the three indexes that I’m testing, one is clustered, one is a nonclustered that does not cover the query and the third is a covering nonclustered index.

SELECT SUM(SomeValue) FROM TestingRangeQueries WHERE ID BETWEEN 20000 and 200000 -- 180 001 rows, 18% of the table

I’m going to run the same range scan query three times, each with an index hint so that SQL will use the three different indexes, regardless of which one it thinks is best.

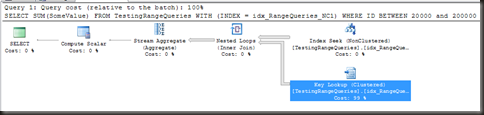

First up, the clustered index.

As expected, we get a clustered index seek (the predicate is SARGable) and a stream aggregate.

Table ‘TestingRangeQueries’. Scan count 1, logical reads 12023, physical reads 0.

SQL Server Execution Times:

CPU time = 94 ms, elapsed time = 110 ms.

So if the advice is correct, this should be the best (lowest CPU, lowest IO). Let’s see…

The first nonclustered index does not cover the query. Hence, seeing as this query returns a substantial portion of the table, we could assume that the optimiser probably wouldn’t chose to use it because of the cost of the key lookups. If that is the case, then if the query probably won’t be very efficient if I force the use of that index.

Table ‘TestingRangeQueries’. Scan count 1, logical reads 551413, physical reads 0.

SQL Server Execution Times:

CPU time = 562 ms, elapsed time = 560 ms.

Ow. Not very efficient at all. Those key lookups hurt.

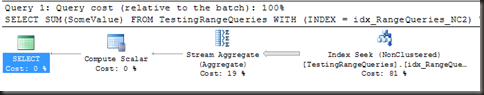

One last index to test, the covering non-clustered index.

Table ‘TestingRangeQueries’. Scan count 1, logical reads 338, physical reads 0.

SQL Server Execution Times:

CPU time = 62 ms, elapsed time = 67 ms.

2/3 the CPU usage of the query using the clustered index and about 3% of the reads. No question about it, this one’s faster and less resource intensive. That pretty much invalidates the claim that the clustered index is best for range queries.

So what’s going on here?

The technet article I linked to at the beginning of this post states the following as reasoning for recommending a clustered index for range queries:

After the row with the first value is found by using the clustered index, rows with subsequent indexed values are guaranteed to be physically adjacent.

Um, well, ignoring that there’s no guarantee of physical adjacency with an index at all, how does this differ from a nonclustered index?

In a clustered index, the leaf pages are logically ordered by the clustered index key (meaning that SQL can follow a page’s next page pointer to get the next page in the key order). To do a range query using the clustered index, SQL will seek down the b-tree to the start of the range and then read along the leaf pages, following the next page pointers, until it reaches the end of the range.

In a nonclustered index, the leaf pages are logically ordered by the index key (just the same as in a cluster). To do a range query using the nonclustered index, SQL will seek down the b-tree to the start of the range and then read along the leaf pages, following the next page pointers, until it reaches the end of the range. If additional columns are needed, SQL will then do a key/RID lookup for each row to retrieve the additional rows.

Not much difference there, other than the key lookups. So ‘physical adjacency’ is pretty much ruled out as a reason using the clustered index (if it was even true)

What is important, as we saw from the two tests of the nonclustered index, is that when the query is retrieving a significant portion of the table (and by ‘significant’ I mean more than about 1%), the index needs to be covering, or the cost of the key lookups becomes overwhelming. Hence, what we want for a range query is a covering index.

The clustered index is always covering, because it contains, at the leaf level, all the columns of the table. It is, however, the largest index on a table1. The larger the index, the less efficient that index becomes. Hence while the clustered index is good for a range query, it’s not the best possible index for a range query.

The best possible index for a range query is the smallest index that is seekable and covers the query (the same as for just about any other query).

Now it’s not always possible to cover a query, and some queries shouldn’t be covered. There will be times when the cluster is the best choice for range queries, either because of the number of columns required or because just about every query filters on a particular column and that column is a good choice for the cluster. Just don’t make the mistake of thinking it’s the only choice.

(1) It is possible to have a nonclustered index that’s larger than the clustered index. Takes some work though and is far from a usual case.

Pingback: @SQLintheWild posts Is a clustered index best for range queries? | sqlmashup

I wish I could use covered queries, but my .NET guys and I are preparing to role out a new production system that has their Containers doing SELECT * (because they need to bring back every single column, every single time). The only exception to this is my reports guy and his queries from his reports, but those are very long aggreagates (that I finally convinced him to do with an INNER JOIN instead of a WHERE statement), with multiple tables – at least x12 of the 30+ in the db.

So – for me – Clustered Indexes is the only way I can go (for now).

Great article and please keep up the great work!

I smell an ORM….

Get article, and better I understand everything you are saying. About time.

Thomas

Great article. I suspect that the advice that Clustered Indexes are best comes from the assumption that you won’t have a smaller covering index – and in many real world scenarios that will be the case. But where it is possible, then the non-clustered covering index wins.

Hi Gail

The logic is flawed because the clustered index contains three columns and the cover index contains two. And if you take into account the Filler column that is CHAR 500 then obviously then the clustered index is raeding more data to satisfy the query. So if you compare apples with apples and created the table without the filler column so that it matches the covering index you will see that its pretty much the same and the CPU and time almost identical. The clustered index will have slightly more pages but not to the extent that you show in your article.

No, the logic’s not flawed, that’s the whole point.

The clustered index is the largest index on the table (because it is the entire table), any nonclustered is going to be smaller and hence a covering nonclustered index is going to be more efficient (unless it includes the entire table)

But then you can’t compare the two,they both have there place in range scan’s and you cant say one is better than the other, its very situational.

Sure I can compare them. Why not?

The assertion that I’m testing, as listed at the beginning, is that ‘The clustered index is the best index for range queries’. That’s easily testable as I have done.

There may be times that the clustered index is preferred, that’s fine, but what I was trying to prove is what I did prove, that the clustered index is not the best index for range queries.

Pingback: Speaker of the Month, January 2014 | Home Of The Scary DBA